I’ve been working with AI tools since 2022, when most of the world first heard the name ChatGPT. Now, it’s part of the vocabulary of anyone who uses the internet. While I’m someone who embeds myself in this stuff, I know the vast majority of regular people don’t.

As such, you might have some ideas about AI that aren’t quite right. No problem. I don’t know anything about car engines. Nobody is expected to know everything.

The purpose of this post is to break things down for regular people who want to better understand AI: what it is and isn’t, what it can do, what it has the potential to do, and where things are going.

So let’s begin with the most obvious misconception, the one that took off the second people heard the term AI.

This is not Terminator or anywhere close to it

In the Terminator movies, Skynet was a computer program that could think for itself. It was actual artificial intelligence.

ChatGPT is not that.

There is no self-awareness, no matter how many people run fake tests to get it to claim otherwise. That just isn’t how these AI chatbots work.

I once asked Google Gemini to explain itself and how it works, and it declared that LLMs (large language models like Gemini and ChatGPT) are essentially “predictive text on steroids.”

But that’s a little too simplistic, so let’s try again.

AI chatbots are trained on massive amounts of data. This could include everything from bicycle repair to pregnancy statistics during World War I. When these AI chatbots generate a response to a question, they use pattern recognition based on the data they were trained on.

Let’s say you ask an AI how to make waffles. To answer you, it pieces words together based on patterns it learned from that training data.

It doesn’t actually know how to make waffles, but it knows that certain words tend to follow others, so it strings them together and hands them back to you. Since the AI has been trained on information related to your question, the response you get back is usually based on a mix of that information.
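To make the “certain words tend to follow others” idea a little more concrete, here’s a toy sketch in Python. It’s a deliberately oversimplified stand-in, not how ChatGPT or Gemini actually work. Real LLMs use huge neural networks that predict pieces of words (tokens) from far more context than a single previous word. This sketch only counts which word follows which in a tiny made-up training text (a so-called bigram model) and always picks the most common next word. The function names and the training text are hypothetical, made up for illustration.

```python
from __future__ import annotations

from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """For each word, count which words came right after it in the training text."""
    words = text.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows: dict[str, Counter], word: str) -> str | None:
    """Return the word most often seen after `word`, or None if it was never seen."""
    options = follows.get(word.lower())
    if not options:
        return None
    return options.most_common(1)[0][0]


def generate(follows: dict[str, Counter], start: str, max_words: int = 5) -> str:
    """Keep appending the most likely next word (no understanding involved)."""
    out = [start.lower()]
    for _ in range(max_words):
        nxt = predict_next(follows, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)


# Tiny, made-up "training data". Real models train on vastly more text.
training_text = (
    "pour the batter into the waffle iron . "
    "close the waffle iron . "
    "cook the waffle until golden ."
)

model = train_bigrams(training_text)
print(predict_next(model, "the"))             # waffle
print(generate(model, "pour", max_words=3))   # pour the waffle iron
```

In this made-up training text, “waffle” follows “the” more often than anything else, so the model predicts it every time. Starting from “pour,” it produces “pour the waffle iron.” That reads like a sentence, but it’s terrible cooking advice. The model only knows which word usually comes next. It has no idea how waffles are made.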

If the response is wrong, it could be because the training data is flawed. Maybe someone trained the AI using recipes from people who also don’t know how to make waffles.

The em dash existed before ChatGPT, and it still gets used today

This is one of the more annoying things to come out of the AI age. As a writer, I often use the em dash for effect, usually to interrupt a sentence and tack something onto it, or to break a sentence and inject a side thought. Before 2022, nobody questioned it. Now, every time I use one, someone jumps out of the woodwork to claim it’s a sure sign of AI.

No.

Just because ChatGPT and other chatbots tend to overuse them does not mean that any inclusion of the em dash is a signal that the text is AI. I still use them today because they are part of the English language, and perfectly valid for use in writing. If you see every em dash as a sign of AI, that’s not my problem.

Now, does that mean you should ignore it? Well, if it shows up a dozen times in a 500-word article, there is cause to be suspicious that either it’s AI, or the author really likes em dashes. After all, AI learned how to write from humans, including bad writers.

Speaking of…

People were bad writers before AI existed

Right along with the em dash, people have also come to believe that bad writing, such as spelling or grammar errors, is a sign of AI. A few days ago, I was listening to a podcast, and the host noticed a double word in an article (the same word typed twice). He viewed that as a sign of AI, saying that blog and news authors are deliberately adding mistakes so their work won’t look like AI.

While some people may be doing that to avoid AI detection, citing any mistake or typo as a sign of AI is a bit… nonsensical.

I know this may come as a shock to many people, but mistakes in writing existed before 2022. I still double-type words all the time (usually short words like “and”). It’s pretty annoying, actually, but it happens.

(Note: I did it twice in this post, but Grammarly caught both.)

I do find this flip funny, though. Before AI, I’d watch podcasts where hosts would see bad writing and immediately say something like “get a copy editor” or “proofread before you post.” Now, if they see bad writing or mistakes, they just assume it’s AI. So they accepted that bad writing existed before AI, but now any sign of it means a human didn’t write it. I don’t know how you make that kind of turn in logic without somersaulting the car carrying your theories.

Yes, AI can make hands, and a lot more

I’m surprised at how many people still don’t realize AI can make hands correctly. It’s been a thing for years now. But it can do a lot more than that.

AI image generators can also create realistic eyes, skin texture, and cinematic environments. A lot of AI services have popped up that make it easy to edit and expand images, so you can go from a portrait shot to a wide shot with little effort.

Image editing has become faster and easier with AI tools over the past year or so, and it’s only getting better.

Beyond images, you’ve likely seen that AI video has become pretty common. It’s no longer a mess of incoherent movements like Sora 1 clips. We can now produce high-fidelity, realistic renders. We’ve seen a lot of hilarious memes, as well as quite a few that raised some eyebrows.

Physics is also being handled well, though fast motion in AI video tends to get… weird.

That said, casual motion and slower movements tend to have excellent quality with certain models and services. Visual fidelity has far exceeded what it once was, and AI is capable of generating photo-quality, lifelike images and video that can go completely unnoticed.

Data centers are not just for AI

This website is hosted on a server in a data center in North Carolina. Facebook is hosted in lots of data centers around the world. Netflix, Google, YouTube, TikTok, and every other web thing you like are all hosted in data centers. The modern global economy runs on data centers.

I say that to point out that getting rid of data centers would, in fact, erase everything you know about modern life. We’ve moved so much of the world onto the internet that taking it down would destroy entire industries and wreak havoc on how we conduct transactions, communicate, educate, and do business.

You would be forced to do the unthinkable: actually talk on the phone and pick up your own McDonald’s, Taco Bell, and Dairy Queen combo. It would be like the dark ages of 1995 all over again.

If it can be done on a computer, it can be done by a computer

We may not be at the point of total automation yet, but since you’re just there to type and move a mouse (both of which an AI can do), it’s entirely valid to say that a computer can do those things without you. How well it does them will be the challenge.

I don’t think humans are going away, but it’s important to understand how much AI can potentially do. Even right now, it can do a lot.

Since forever, computers have been very good at running automated tasks. For instance:

- Your phone receives notification updates from social media apps.

- Your laptop manages RAM when you have six hundred tabs open in Chrome.

- Your desktop automatically updates Windows and restarts itself, even though you told it not to, because Microsoft thinks they should control what happens with the device YOU own… sorry. That’s a rant for a different day.

The point is that your devices already do things on their own. Combine that with AI (especially AI agents), and you can task a computer with a lot of complex work. This will, of course, lead to job losses in some areas but job creation in others. This isn’t new. It has happened with disruptive technologies throughout human history.

What matters is how you pivot and adapt to the disruption. If there’s one thing humans throughout history have been good at, it’s adapting to change.

You should not try to vibe code something you don’t understand

A moment ago, I talked about AI being able to handle complex tasks, so it’s fitting that we jump into vibe coding now. Vibe coding means different things depending on who you talk to. While researching a different blog post, I came across at least five different definitions.

What a lot of people tend to settle on when defining vibe coding is that it’s a person sitting down and prompting the AI to make a thing, then prompting changes over and over again until they get what they want. So imagine this:

Person: “Create a super cool website.”

AI does it.

Person: “Now add a login system so people can log in.”

AI does it.

Person: “Now add a sign-up so they can sign up and pay me using PayPal.”

AI does it.

Person: “Now make…”

The AI will keep adding more and more code to give you a result. This is fine for some projects, but if you don’t at least have a basic understanding of the code it’s creating, you won’t know if things are being done the right way.

I use vibe coding for certain tasks because it speeds things up. After all, AI types a lot faster than I do. But I don’t have it create code that I can’t look at and understand. I’ve had it code things and, upon inspection, realized that what it wrote worked but could have been done better, either for efficiency or for future development.

When you’re vibe coding the way I described above, the AI piles new code on top of existing code that was never designed to support the new thing you’re adding. You can end up with a mess in the background. If you understand the code, at least well enough to ask questions when something seems off, you’ll have a better chance of spotting these problems and correcting them, or at least seeing where you might run into issues later.

AI detectors are hugely unreliable

A lot of people like to run tweets and other text through AI detectors to see if the person posting it is using AI. Often this is done to ridicule them. The problem, of course, is that these detectors don’t work very well.

Want an example?

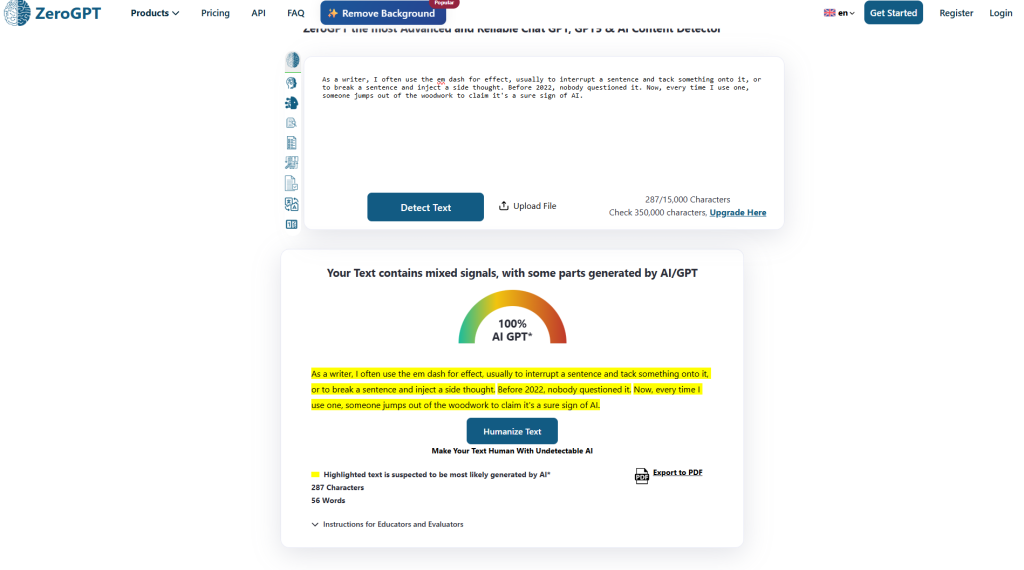

Above in this post, I wrote about my use of the em dash. I wrote every word of that myself, so it’s 100% all me. But not according to ZeroGPT, which claims to have “best-in-class performance” at detecting AI.

You could say this is a rare case, but that hasn’t been my experience at all. For a while, I was running my own writing through these things to see if people might think it was AI. Almost everything I submitted came back flagged as either fully or partially AI. It was driving me nuts.

Eventually, I realized this was a pointless endeavor because the problem wasn’t my writing; it was that these detectors are just terrible. Some are certainly better than others, but even Grammarly’s AI detection has flagged my writing. Content I spent hours writing in the Grammarly web editor would get flagged shortly after I typed it.

AI detection works by trying to identify patterns used by other AI tools. But there’s a big problem with that.

Tools like ChatGPT are trained on human-written content, so their responses emulate the way we write. They might overdo some of our writing habits, and those habits are exactly what AI detectors look for. But they’re still our habits. Once AI regurgitates them, they get labeled “AI patterns,” and then human writing gets flagged for using them.

Basically, a vicious circle.
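Here’s a toy illustration in Python of why pattern-based detection backfires. I don’t know how ZeroGPT or Grammarly work internally, and real detectors rely on more sophisticated statistical signals. This hypothetical checker uses a single made-up signal, em dashes per 100 words, with an arbitrary threshold. Its only purpose is to show how a habit that AI picked up from humans can end up flagging a human.

```python
# Toy "AI detector": one made-up signal and an arbitrary threshold.
# Real detectors are far more complex; this only illustrates the idea.

EM_DASH = "\u2014"  # the em dash character


def em_dash_rate(text: str) -> float:
    """Return the number of em dashes per 100 words."""
    words = text.split()
    if not words:
        return 0.0
    return 100 * text.count(EM_DASH) / len(words)


def looks_like_ai(text: str, threshold: float = 2.0) -> bool:
    """Flag text whose em-dash rate is above an arbitrary threshold."""
    return em_dash_rate(text) > threshold


# A human-written sentence (made up for this example) that uses two em dashes.
human = (
    f"I use the em dash for effect{EM_DASH}usually to interrupt a sentence"
    f"{EM_DASH}and nobody questioned it before 2022."
)

print(em_dash_rate(human), looks_like_ai(human))  # 12.5 True
```

A human wrote the sample sentence in the style I described earlier, but it scores 12.5 em dashes per 100 words and gets flagged anyway. Short passages make this toy even less reliable. Two ordinary em dashes in a 16-word sentence push the rate far past the threshold.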

No, Sora and other tools should not be completely free

A few months after Sora 1 was released, lots of images and videos started popping up demanding that Sora and ChatGPT be 100% free for everyone. Many claimed they “deserved” to have it for free.

No, you don’t.

There is an actual cost for generating your goofy pictures of sad cats holding a sign proclaiming your right to free stuff.

Other generative AI tools face similar costs. These things don’t operate for free, and in some cases, there is a hefty price tag attached.

How hefty?

Reportedly, OpenAI spends $15 million a day on Sora, and recoups almost none of that through the subscriptions people have purchased.

And that’s why it’s going away this weekend.

A lot of internet services have gotten people used to receiving service for “free,” and it’s created a sense of entitlement among a lot of people. Things cost money, and the GPUs behind these services have a staggering cost.

To put it into perspective, some of these cards cost more than my Santa Fe when I bought it brand new a few years ago. Companies like OpenAI run tens of thousands of those. Of course they can’t afford to just give people full access to their product for free.

Whatever the mainstream media is saying about AI is usually old news or wrong

Another statement that will likely come as no shock to anyone: the mainstream media is not known for being overly factual. They’re also often late to the game with a lot of stories, especially if it’s not something they usually talk about.

For instance, do you ever hear the media call NVIDIA an AI company? They aren’t. NVIDIA is a technology company that largely deals in hardware, specifically GPUs. Until 2022, they were primarily known as a company that makes graphics cards for gaming computers. But because their cards can be used for things other than playing Fortnite, the media calls them an AI company.

I find this odd because they didn’t call them a crypto mining company when everyone was using their cards to mine cryptocurrency.

Besides being factually wrong about very public, obvious information, the mainstream media often confuses terms or talks about AI in ways that lean far more toward science-fiction versions of it than toward what’s actually going on. Again, this is not their wheelhouse, so they probably don’t even realize they are getting these things wrong.

And sometimes they just don’t care. I’ve heard some podcast personalities say things like “I don’t know how it works. Do you?” as if that means nobody knows how it works.

They then criticize it based on their version of how it works after telling you they don’t know how it works, which doesn’t make a lot of sense to me.

They are also behind the curve when it comes to advancements in AI. For the longest time, the media and commentators in the media space didn’t recognize where image generation was really going. They thought it was just garbage images of people waving three-fingered hands, with unrealistically blue eyes and ultra-flawless plastic skin.

The rest of us, however, knew that wasn’t all it would be. Quality and prompt adherence would inevitably get better, and we saw the potential of being able to build feature-length films with it and bypass the need for Hollywood or major movie studios altogether.

And that’s what’s happening. But it isn’t new. Regular people, unaffiliated with major studios, have been experimenting with AI video for quite a while. We’ve been using lots of open-source tools the media has no idea exist to refine and produce works based on our own characters and ideas.

And those works are only getting better with time.

Are there problems? Sure, of course. But those problems are being solved faster than the media knows, and that’s why major corporate studios like Disney have invested heavily in AI.

You’re often sharing your content rights

I talk about this in a different blog post I’m working on, but it’s worth bringing up here. In many cases, the terms of service you didn’t read say the AI company gets certain rights to what you give them.

That includes what you type and pictures or videos you upload.

In the terms of service, they usually say something like “you grant us an irrevocable royalty-free sublicensable license.” The keywords there are “irrevocable,” which means you can’t take it back later, and “sublicensable,” which means they can grant rights over your stuff to others.

Following that is often a list of what they can do with your stuff, usually worded so broadly that it doesn’t put any real limits on them. For instance, if it says they can use it to “improve the Services,” what does that mean? It could mean anything.

Would training on your photos improve the services? Sure.

Would creating a character out of you that others can use improve the services? Probably.

Would sublicensing your likeness to Sony Pictures to make a big-budget movie with you as the main character, without paying you any royalties, improve the services? Definitely.

You see my point?

Before you freak out, understand that not all AI services are like this, but it’s a good idea to read the TOS and at least look for the parts that define what they can do with your content.

AI will change the landscape of most industries and how you work

This goes without saying, but I’m saying it anyway: AI will change your job.

I don’t mean the whole place will be run by ChatGPT. That’s not a great idea. There’s a reason for the “ChatGPT can make mistakes” label at the bottom of every chat.

However, these tools are getting a lot better. If you remember, this used to be the highlight of AI:

That’s an image I made with DALL-E in 2022. As you can see, it is in fact a masterpiece of elegance and sophistication that rivals the great artists of the Renaissance era.

I think I was making a cat. Or a muppet. Maybe a ferret. I have no idea what that is.

By the end of 2026, AI is going to look a lot different than it did at the end of 2025. The adoption rate, which has been steadily increasing, will also be higher.

Small businesses are using it for a wide variety of tasks, including copywriting, editing, support chatbots, marketing, research, bookkeeping, and spam filtering. It’s built into a lot of tools you use on a daily basis. It helps save time, crunch data faster, generate ideas, qualify leads, and so much more.

In other words, it’s not going away. But it will reshape how things work.

There is also the idea that eventually, AI will remove the need for people to be involved with any kind of job. I don’t think so.

First of all, manual labor jobs are never going away. We see movies like I, Robot and think that’s somehow economically feasible. MIT and other groups showing off robotics tech on YouTube make people think we’re right around the corner from a robot plumber coming to unclog your kitchen pipes.

Not remotely close.

Plumbers have to crawl through tight spaces, use touch to tell whether something is rusty or damaged, and combine grip strength with fine motor skills to work with pipes and tools. That’s very difficult to replicate with robotics, and extremely expensive. On top of that, robots and their AI brains need to understand the many nuances of each plumbing situation.

In other words, human plumbers can relax.

Second, people still need to be involved in AI processes because our brains work very differently from machines. We are more creative, think outside the box, and can sometimes spot problems in what an AI is doing better than it can. Plus, what we want isn’t always what we say we want. The AI may give us what we told it, but once the output happens, we can see what needs to be fixed.

The same is true for AI hallucinations, where the AI confidently states something that isn’t true. The AI doesn’t realize it’s hallucinating unless another AI is checking it. And if that one is also hallucinating, or there is drift going on, you’re going to have a big mess on your hands.

People will always need to be involved at some level. And because of that, new career roles will emerge to meet that specific need. So, it’s not as bleak as you might think.

It’s also worth noting that entire industries can be created by small teams or even individuals when they have reliable AI tools and the know-how to use them effectively. Right now, lots of people are using AI to create small companies and side businesses, and to improve their existing workflows and offerings. This adds efficiency and revenue where it otherwise wouldn’t exist.

And that’s a big point, which is why I’m harping on it so much. AI is a big deal. It has the potential to make things easier to accomplish, giving you much greater reach and ability than before. That isn’t to say it’s foolproof or 100% on point, because obviously it isn’t…

(…maybe I was making a dog…)

But many AI tools can solve a wide range of problems, speed up your workflow, and free up time. When I introduced it into my workflows, it took a bit of time to adapt and fit things together. Now, it’s a regular part of my processes for lots of things, from development to blog posting to research to random life stuff. It’s just one of many tools I’ve incorporated into my arsenal, and it’s been quite effective for me.

So while I don’t recommend asking ChatGPT to run your entire life or business, it and many other AI tools can help with a lot of things.
